Trustworthy AI is essential for integrating advanced technologies into scientific research. ScienceOS builds trust by carefully implementing six core principles identified by scientists, ranging from legal compliance and data privacy to transparency and ethical oversight. By tailoring these principles to the specific needs of the scientific community and engaging stakeholders at both the individual and the organizational level, scienceOS creates a robust foundation for reliable, responsible AI-assisted research. But scienceOS goes further, exploring additional aspects such as dehumanizing AI interactions, balancing trust appropriately, and addressing the gap between trust and actual use.

This article explores how these core principles and extended approaches guide the development and operation of scienceOS to foster trust and promote effective scientific collaboration.

Core principles of trustworthy AI

ScienceOS builds trust by addressing six key aspects identified by researchers as critical for making AI systems credible and reliable for all users.

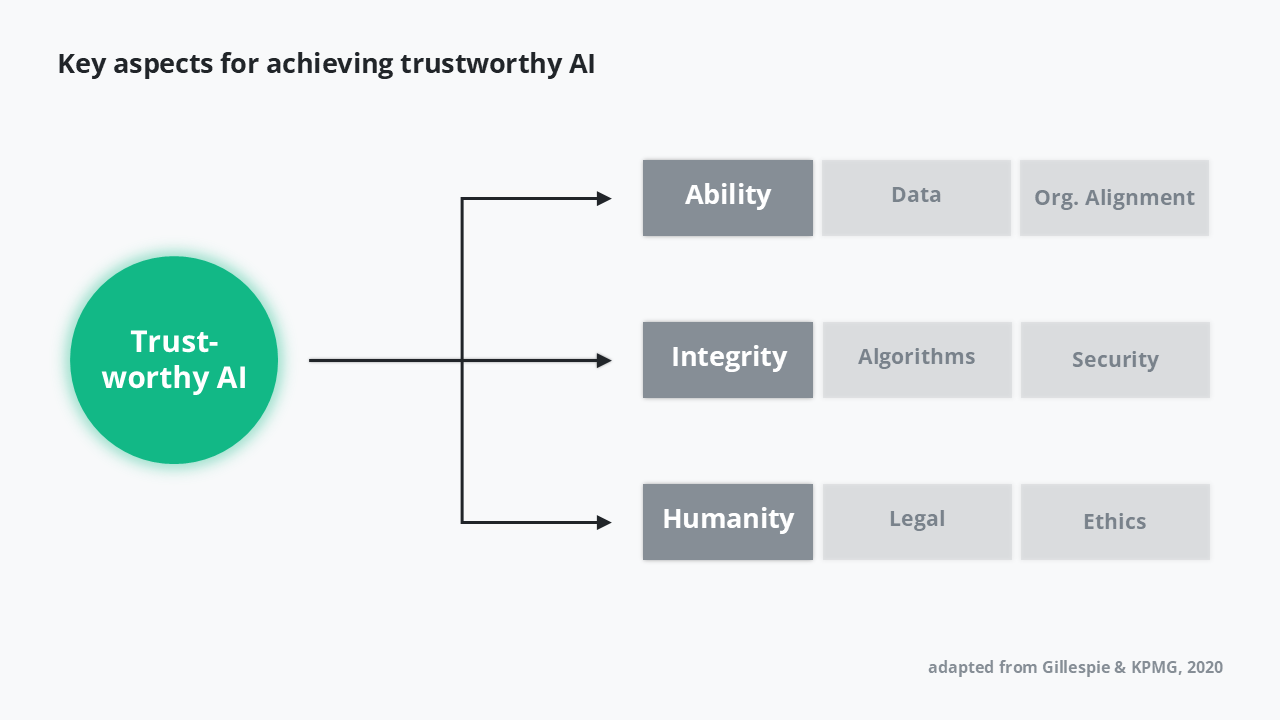

Key aspects for achieving trustworthy AI. A schematic showing how trustworthy AI is defined by three dimensions: ability, integrity, and humanity. (Adapted from Gillespie & KPMG, 2020.)

While these guidelines are broadly applicable, scienceOS focuses on implementing them specifically for scientists, ensuring the system meets the unique needs of the research community.

- Data practices

Data practices emphasize quality, traceability, and responsible handling, addressing biases and ensuring that all information driving the AI is reliable and verifiable. By design, scienceOS follows the principles of minimal data collection, need-to-know, and need-to-have to ensure data privacy. For data quality, the AI by default draws the information for its answers only from published research. Alternatively, users can upload unpublished materials or other sources to ground the AI’s answers in their personal literature collection. For traceability, scienceOS offers insight into the internal “thought processes” of the LLM while it works on the user’s input. A chat copy button exports the entire chat to another document, and users can delete their logs, giving them full control of their data.

- Organizational alignment

Organizational alignment ensures that scienceOS operates consistently with institutional ethical standards and research objectives, supporting responsible adoption. When setting prices, the team asked users what would be fair to charge for a paid version. The pricing strategy was then based on their answers and includes a non-profit partnership program with reduced prices.

- Algorithms

The algorithms of the AI research tool are designed for transparency, reproducibility, and robustness, enabling users to understand how outputs are generated and trust their accuracy. Because the underlying algorithms evolve continuously, scienceOS aims to translate technological advances into improvements in the robustness, performance, and flexibility of the AI agent.

- Security

Security is embedded in the system’s design, including robust measures to prevent unauthorized access, storage of sensitive research data on EU-based servers, and a no-training policy under which user data is not used to train AI models.

- Legal compliance

Legal compliance is foundational. The platform adheres strictly to regulations such as the GDPR, as well as to rules on business conduct and AI regulation, protecting data privacy and user rights.

- Ethical considerations

Finally, ethical considerations are integrated throughout the system, covering fairness, explainability, accessibility, human oversight, and other aspects of academic integrity. Human oversight preserves user autonomy through a human-in-the-loop approach. The commitment to accessibility shows in the app’s compatibility with browser translation tools and in tooltips and clear button labels that let screen readers navigate the app. In terms of fairness and societal well-being, the pricing policy described above aims to provide a fair offer. Together, these measures aim to create an environment where scientists can critically evaluate outputs without relying blindly on the AI.

While scienceOS implements these guidelines, this article goes further by examining the research behind trust in AI, considering how scientists interact with the system and how organizational and institutional factors shape trust.

Engaging multiple stakeholders and levels

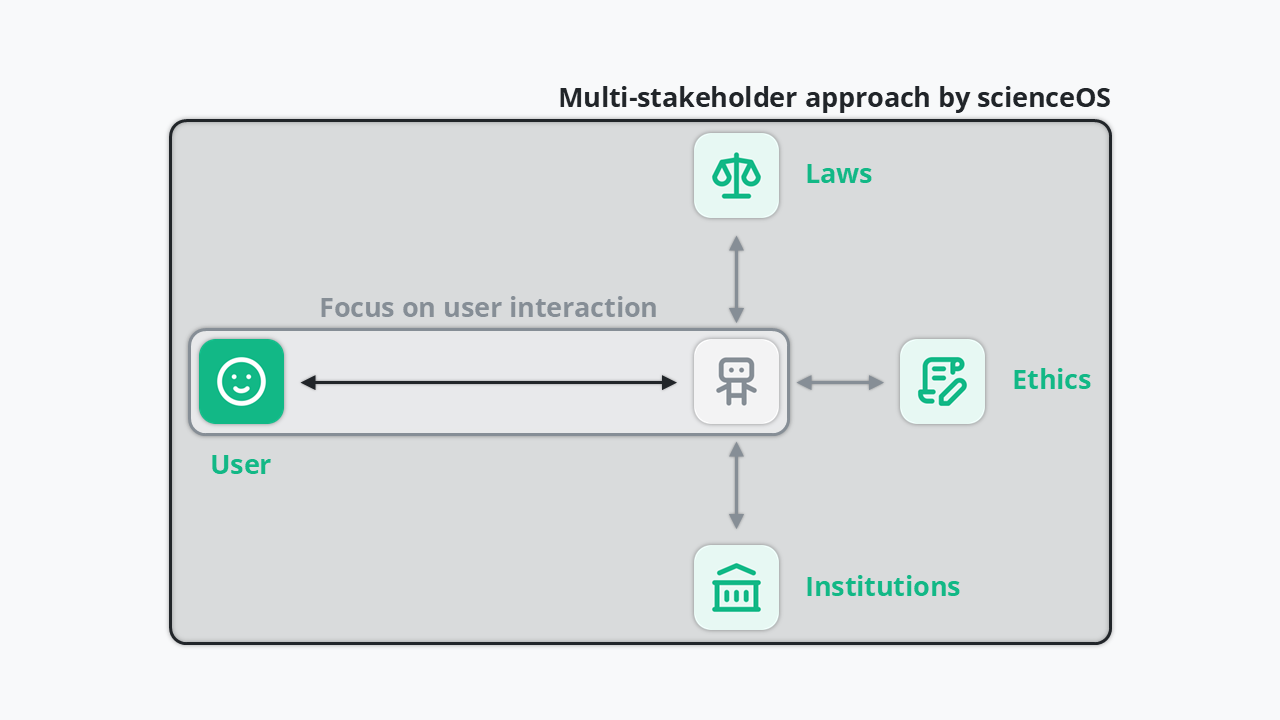

Trust in AI depends not only on the system itself but also on the broader environment in which it operates. Most research focuses narrowly on individual-level, or micro-level, interactions: how a single user perceives and engages with the AI, often in controlled experimental settings. This overlooks macro-level factors, such as organizational policies, institutional standards, and societal norms, which also shape trust. ScienceOS takes a multistakeholder, multilevel approach, with scientists as the primary users. At the micro-level, trust is supported through features like transparency, explainability, and accuracy, helping individual users evaluate and rely on outputs.

One of these micro-level interactions is addressing errors. All AI systems occasionally produce errors or unexpected outcomes. Such events undermine user trust if not handled properly. Research emphasizes the importance of documenting, communicating, and repairing trust after failures. ScienceOS addresses this proactively by systematically tracking errors, informing users about updates and corrections, and implementing fixes promptly. This iterative approach demonstrates responsiveness, accountability, and reliability, ensuring that trust can be restored and strengthened over time, reinforcing user confidence in the system’s ongoing performance.

The multi-stakeholder approach by scienceOS. A schematic of the scienceOS multi-stakeholder approach for shaping trust in AI by involving the user, laws, ethics, and institutions.

At the macro-level, trust is supported by legal compliance and alignment with scientific and institutional standards. By addressing both levels, scienceOS ensures that trust is contextual and justified, reflecting both system performance and the environment in which it is used.

Dehumanizing the AI

Anthropomorphism is one of the key factors influencing trust in AI, and views on it differ. Some research suggests that the more human-like an AI tool is, the more users will trust and adopt it (Waytz, 2014; Kim, 2018). Other research points to the opposite effect, providing evidence that more human-like machines are trusted less in specific cases (Chui, 2019).

The scienceOS AI research agent is deliberately not designed to be highly anthropomorphic. It is set up to emphasize neutrality and accuracy over human-like behavior. However, because it is built on LLM technology, it will act human-like if a user prompts it to do so. The agent is meant to be perceived as a thing: a research tool. The main reason for this approach is that giving this novel technology an intention to persuade carries too great a risk, because it is unclear what “motivation” these models have absorbed from their data. Anecdotally, these models often seem driven mainly to end the conversation by getting the job done, rather than by asking what the job is actually about.

Balancing trust appropriately

Trust is not simply “the more, the better”, especially not in the context of scientific processes. Excessive trust can lead to overreliance and poor decision-making, while insufficient trust may prevent users from benefiting from AI tools. Optimal trust involves a careful balance, where users rely on AI when appropriate, but retain the ability to question and evaluate its outputs.

ScienceOS seeks this balance by encouraging scientists to actively test results and provide feedback, which is then acted on, while ensuring transparency in its methods and clear communication of its limitations.

Does ensuring trust lead to action?

One of the key reasons trust matters in AI is that it is expected to encourage adoption and effective use. Trust theory suggests that the influence of trust on behavior depends on perceived risk: the higher the potential consequences of a decision, the more trust matters. In other words, how much trust matters for scientists’ use of AI tools depends greatly on the risk associated with the task. Research suggests that for low-risk activities like literature searches, convenience often drives usage even if full trust is not established. For high-stakes tasks such as submitting research for publication, genuine trust is crucial; without it, scientists are unlikely to rely on AI outputs.

However, saying that one trusts a system does not always translate into action, a phenomenon known as the trust intention-behavior gap. This gap between trusting a system and actually using it highlights a key challenge in AI adoption: inspiring trust alone is not enough; systems must also encourage meaningful and responsible integration into real scientific workflows.

Using trustworthy AI in research

Trustworthy AI is not just about powerful technology; it is about how that technology is designed, evaluated, and used. ScienceOS is built to support rigorous scientific work through transparency, clear system boundaries, reliability, and alignment with scientific standards. But meaningful impact only happens when researchers engage with it thoughtfully and critically.

You now have a clearer framework for assessing whether an AI tool is trustworthy: look for transparency, accountability, appropriate handling of risk, and meaningful human oversight. These principles apply not only to scienceOS, but to any AI system you consider using.

Trust in AI should be informed and proportional, and when it is, it becomes a catalyst for responsible, accelerated scientific progress.