Trust is commonly defined as a willingness to be vulnerable based on expectations of competence, integrity, and benevolence (Svare, 2020; Baghramian, 2020). Since this definition emerged from research on human-to-human relationships, applying it to AI requires careful consideration (McGrath, 2024).

Unlike human-to-human trust, human-AI trust depends less on social cues or shared relationships and more on system performance, transparency, ethical design, and the context in which the system is used.

Decades of research on interpersonal trust show that trust is shaped by multiple factors, including reputation, contextual influences, and perceived reliability. A large meta-analysis of human-to-human trust highlights how strongly these contextual and relational dimensions affect whether people choose to rely on others (Hancock, 2023). Although AI systems do not possess intentions or social motivations in the human sense, these findings remind us that trust is never one-dimensional. It is shaped by expectations, perceived risk, and the surrounding environment.

Measuring and understanding trust

Measuring trust in AI is therefore complex, and even more so in specialized settings such as researchers’ trust in AI tools. Simple metrics often fail to capture how researchers actually interact with AI tools in real scientific workflows. At scienceOS, trust is approached as a multi-dimensional and context-dependent phenomenon. We consider not only technical system qualities, but also how scientists perceive risk, evaluate outputs, and decide whether to rely on them in practice.

This article presents findings from an internal survey of 53 scienceOS users, conducted between November 2025 and January 2026. The aim was to examine how perceived trust and perceived risk vary across different scientific tasks, and what these patterns reveal about designing AI systems that scientists can use confidently and responsibly.

Surveying researchers’ trust in AI

Scientists in particular approach AI, like many other topics, with a high degree of skepticism and critical thinking, shaped by their analytical mindset and reliance on evidence. Their trust cannot be assumed; it must be earned through demonstrable competence, transparency, and alignment with scientific standards.

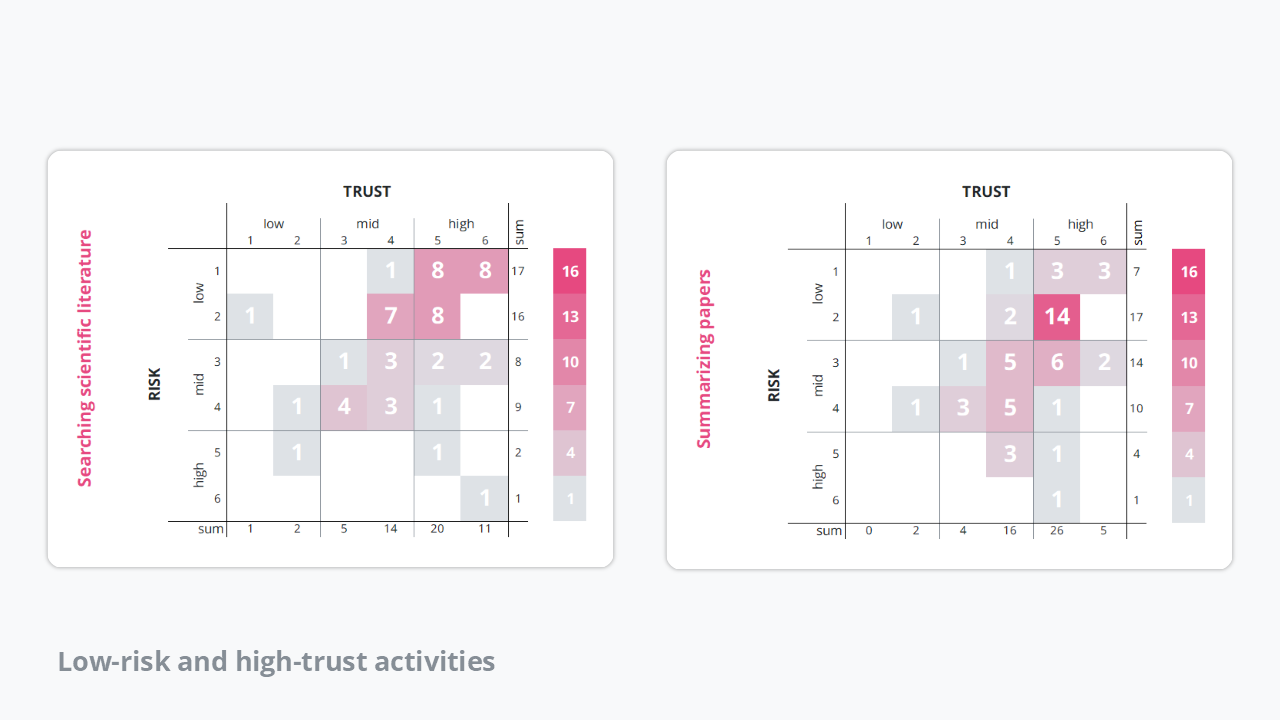

Previous research has established that the level of trust required of an AI system varies with perceived risk. To test whether this holds for users of scienceOS, the survey asked participants to rate how much they trust scienceOS for specific tasks and how risky they perceive those tasks to be, both on a scale from 1 (do not trust at all / not risky at all) to 6 (fully trust / very risky). The results support the established pattern. For low-risk tasks, scientists may use AI for convenience even if trust is limited. In this survey, searching scientific literature and summarizing scientific papers were consistently rated as low-risk, high-trust activities.

Low-risk and high-trust activities. The heatmaps indicate that low-risk scientific tasks (searching for scientific literature and summarizing research papers) correlate with high trust in AI tools.
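The tabulation behind heatmaps like the ones described here can be sketched in a few lines of Python. This is a minimal illustration and not the analysis code used for the survey: the function name `rating_matrix` and the example ratings are hypothetical, and the sketch assumes each response is a (trust, risk) pair on the 1 to 6 scale.

```python
from collections import Counter

SCALE = range(1, 7)  # ratings run from 1 to 6, as in the survey


def rating_matrix(responses):
    """Count respondents per (trust, risk) cell for a single task.

    `responses` is a list of (trust, risk) pairs, one per respondent.
    Returns a 6x6 list of lists: rows are trust 1..6, columns are risk 1..6.
    """
    for trust, risk in responses:
        if trust not in SCALE or risk not in SCALE:
            raise ValueError(f"rating out of range: {(trust, risk)}")
    counts = Counter(responses)
    return [[counts[(trust, risk)] for risk in SCALE] for trust in SCALE]


# Hypothetical ratings for one task; these are NOT the survey data.
example = [(5, 1), (5, 2), (6, 1), (4, 2), (5, 1), (2, 5)]
matrix = rating_matrix(example)

for trust, row in zip(SCALE, matrix):
    print(f"trust={trust}: {row}")
```

Each cell of the resulting matrix holds the number of respondents who gave that combination of trust and risk rating. A cluster in the high-trust, low-risk corner corresponds to the pattern described for literature search and summarization.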

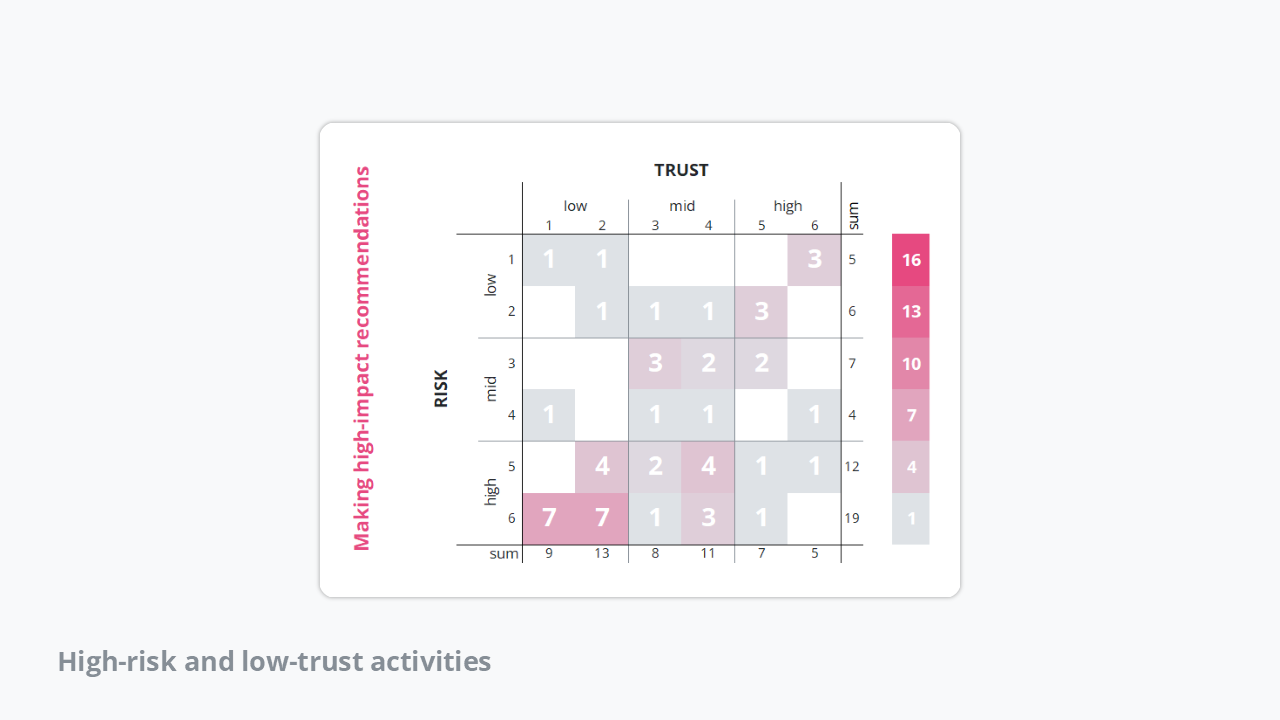

Conversely, tasks such as making high-impact recommendations (e.g., clinical decisions) were perceived as high-risk and correspondingly received less trust, which is consistent with the relationship between perceived risk and trust.

High-risk and low-trust activities. The heatmap indicates that high-risk scientific tasks (making high-impact recommendations) are associated with low trust in AI tools.

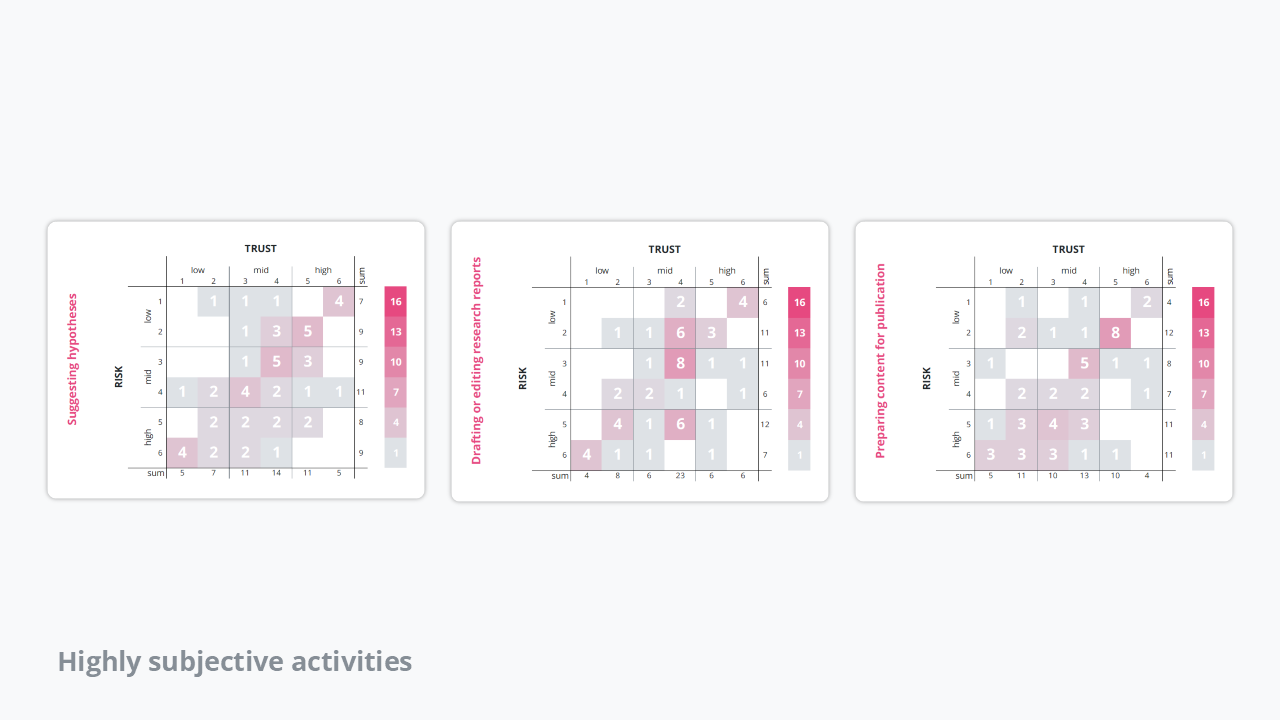

However, not all tasks showed a clear-cut relationship between perceived risk and trust. For suggesting hypotheses and for drafting or editing research reports, responses were spread more widely across the scale. Some users considered these tasks risky, while others did not. Interestingly, most respondents rated their trust at level 4, indicating a generally high, but not unanimous, level of trust.

This distribution suggests that trust in AI for these more interpretive or creative tasks is more subjective and context-dependent than for more straightforward or high-impact tasks. Factors such as the specific research area, years of research experience, prior experience with AI tools, or the perceived consequences of errors may influence how risky and trustworthy users perceive these tasks to be.

Highly subjective activities. The heatmaps suggest that, for more interpretive and creative scientific tasks (suggesting hypotheses, drafting or editing research reports, and preparing content for publication), perceptions of risk and trust are more subjective among scientists.

The data on preparing content for publication contained surprising insights. Both trust and perceived risk show a wide range of opinions among users of scienceOS. Trust levels are fairly evenly distributed across the scale, with many users expressing moderate to high trust (3 to 5), but no clear consensus. Perceived risk ratings are similarly divided: while a sizable number of users consider this task low risk, an almost equal share views it as quite risky.

This spread suggests that users of scienceOS have mixed feelings about AI’s role in preparing publication-ready content. Some may feel comfortable relying on AI for tasks like formatting or language polishing, while others remain cautious, concerned about potential errors or nuances that could impact the final published work. Overall, this highlights the subjective and context-dependent nature of trust and risk in more complex, high-stakes AI-assisted tasks.
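One way to put a number on how "evenly distributed" or "divided" a set of ratings is would be a normalized Shannon entropy over the six rating levels. The sketch below is an illustration only: the article does not state that such a measure was computed, and the function name `normalized_entropy` and the sample ratings are hypothetical.

```python
import math
from collections import Counter


def normalized_entropy(ratings, levels=6):
    """Return a spread score between 0 and 1 for a list of ratings.

    0 means every respondent chose the same level (full consensus);
    1 means the ratings are spread evenly across all `levels`.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    total = len(ratings)
    counts = Counter(ratings)
    # Shannon entropy of the observed rating distribution.
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    # Divide by the maximum possible entropy, log(levels), to normalize.
    return entropy / math.log(levels)


# Hypothetical ratings; these are NOT the survey data.
consensus = [4, 4, 4, 4, 4, 4]
divided = [1, 2, 3, 4, 5, 6]

print(normalized_entropy(consensus))
print(normalized_entropy(divided))
```

A score near 0 would correspond to a task with clear agreement among respondents, while a score near 1 would correspond to the kind of mixed opinions described for preparing content for publication.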

Task-dependent calibration of trust

The survey findings show that trust in AI varies considerably depending on the scientific task. For example, tasks like literature searching are generally seen as low risk and receive broad trust, while more complex activities such as preparing content for publication create mixed opinions among users of scienceOS. This supports the view that trust in AI is subjective and depends on the specific task and individual perspectives.

Because of these differences, scientists should carefully and intentionally evaluate AI tools based on the risk level of each task, being more cautious with high-risk uses while feeling comfortable using AI for lower-risk support tasks. It is also important to be transparent about AI’s role in their work and to use clear disclaimers when relying on content created with AI support. By doing this, researchers can make responsible decisions that improve their work, and developers can build AI systems that are reliable, ethical, and trustworthy.